if you are thick provisioning the VMDK's, they will consume all of the space you've provisioned to them within the volume - this is one of the features of the NFS VAAI plugin.The warm cache extension enhancement provides increased sequential read performance for such environments, where there are very large amounts of deduplicated blocks"Īgain, am i missing something? This array was bought for VDI, at the moment im not seeing any real deduplication savings as each VM is using around 60GB. These zero blocks are all recognized as duplicates and are deduplicated very efficiently. Although it is applicable to many different environments, intelligent caching is particularly applicable to VM environments, where multiple blocks are set to zero as a result of system initialization. NetApp includes a performance enhancement referred to as intelligent cache. For these reasons NetApp does not recommend adding compression to an operating system VMDK Further, since compressed blocks bypass the Flash Cache card, compressing the operating system VMDK can negatively impact the performance during a boot storm. These VMDKs typically do not benefit from compression over what deduplication can already achieve.

Maximum savings can be achieved by keeping these in the same volume. "Operating system VMDKs deduplicate extremely well because the binary files, patches, and drivers are highly redundant between virtual machines (VMs). If 70GB of an 80GB disk (VM level) is unwritten space, then i thought that would at least dedupe? Some of the whitepapers suggest it should: I have run a reclaim space, i have sDeleted the template VM, all to try and zero out any unused space So writing random data and dedupe is freeing up more space than when i just dedupe on 5 identical VM's.

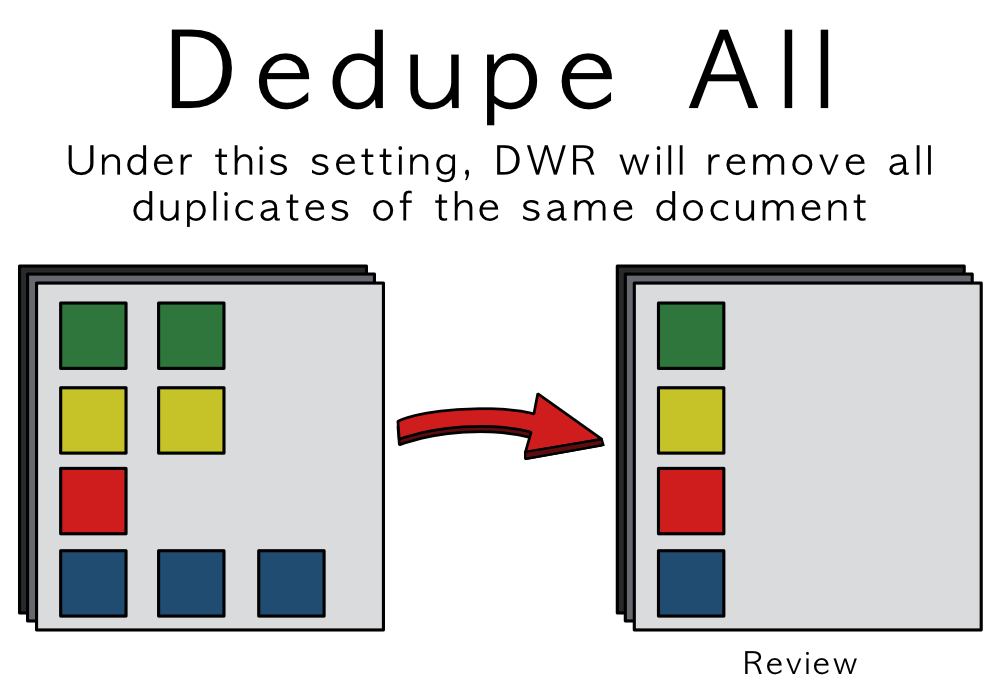

If i fill one of the hard disks up with data (roughly around 60GB), and then delete that data and rerun DeDupe, then weirdly it then shows an extra 30-40 GB available Space. Surely this isnt right when they are identical VM's?ģ00GB Used in total, when at the OS level only 10GB is used (50GB across 5x VM's) So according to the Oncommand Sys Manager Stats, the 5x VM's are using 60GB each after dedupe. Actual used space on the Volume is then 300GB. (Total: 400GB Allocated, of which the OS using 50GB).ĭedupe enabled and ran, saves me about 105GB. If this is all correct then is there a way i can report on thin provisioned values at the Volume level ? Unified Manager? Also, when a VM is deleted from a volume do i have to manually reclaim space or is there a process that will do this uatomatically?Īs above. I realise we are thick provisioning at VMWare level but i was told this would make no difference and the Array would handle the thin provisioning. I am safe to assume the Volume doesn't thin provision because we are thick provisioning in vSphere? If i look at the Aggregate then i can see the increase is minimal which suggests thin provisioning is working. If i look at the Volume it shows (before dedupe) that 400GB of the 600GB volume has been used. If i create 5x VMs based on the template (Total: 400GB Allocated, of which the OS using 50GB). Netapp has a 600GB NFS Volume - Thin Provisioned - Dedupe enabled. In vSphere we have a Win 7 Template (80GB Disk, only 10GB is actually in use). It is all setup and currently trying to evaluate space savings etc before we make the storage live and a few questions have come up that i was hoping for help with. Presented via 3 ESX 5.1 Hosts - all with NFS VAAI Plugin, VSC Installed. We have just configured an FAS2552 (2 Node), Clustered mode, using NFS
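The space figures quoted in the question can be cross-checked with a few lines of arithmetic. This sketch only re-derives the poster's own numbers (5 VMs, 80GB disks, 10GB written per guest, about 105GB saved by dedupe). It says nothing about how ONTAP or OnCommand actually compute their reports. The variable names are illustrative.

```python
# Figures taken from the question above, in GB.
VM_COUNT = 5
DISK_SIZE = 80        # provisioned size of each VM disk
OS_USED = 10          # space actually written inside each guest
DEDUPE_SAVED = 105    # savings reported after the dedupe run

allocated = VM_COUNT * DISK_SIZE          # space provisioned in vSphere
guest_used = VM_COUNT * OS_USED           # space the guests have written
after_dedupe = allocated - DEDUPE_SAVED   # the "about 300GB" used on the volume
per_vm = after_dedupe / VM_COUNT          # the "around 60GB" per VM
gap = after_dedupe - guest_used           # consumed beyond what the guests wrote

print(allocated, guest_used, after_dedupe, per_vm, gap)
```

This prints `400 50 295 59.0 245`. The reported "300GB used" and "60GB per VM" follow directly from subtracting the dedupe savings from the full 400GB allocation. The poster's puzzle is the 245GB gap, which should largely disappear if the unwritten space deduplicated away.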
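The whitepaper passage relies on block-level deduplication: identical blocks, including blocks that are entirely zeros, only need to be stored once. The toy model below is a simplification and not NetApp's implementation. It splits hypothetical disk images into fixed 4 KB blocks (the block size is an assumption for illustration), fingerprints each block with SHA-256, and counts how many unique blocks would remain.

```python
import hashlib

BLOCK_SIZE = 4096  # assumed block size for this toy model


def unique_blocks(images, block_size=BLOCK_SIZE):
    """Return (total_blocks, unique_blocks) across a list of byte images.

    A trailing partial block, if any, is fingerprinted as-is.
    """
    seen = set()
    total = 0
    for image in images:
        for offset in range(0, len(image), block_size):
            block = image[offset:offset + block_size]
            seen.add(hashlib.sha256(block).digest())
            total += 1
    return total, len(seen)


# Hypothetical "VM disk": 2 blocks of OS data followed by 6 zeroed blocks.
os_data = b"\x01" * BLOCK_SIZE + b"\x02" * BLOCK_SIZE
zeros = bytes(6 * BLOCK_SIZE)
vm_image = os_data + zeros

# Five identical clones, as with VMs deployed from one template.
total, unique = unique_blocks([vm_image] * 5)
print(total, unique)
```

Here 40 logical blocks reduce to 3 stored blocks: two OS blocks plus a single zero block. The model covers only deduplication. It does not model space reservation, which is what the reply above points to for thick-provisioned VMDKs.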
